Zuckerberg defends inaction on Steve Bannon’s page

From CNN Business’ Kaya Yurieff

Last week, Zuckerberg told employees at a company meeting that Steve Bannon’s suggestion that Dr. Anthony Fauci and FBI Director Christopher Wray should be beheaded was not enough of a violation of Facebook’s rules to permanently suspend the former White House chief strategist from the platform, an employee told CNN.

“How many times is Steve Bannon allowed to call for the murder of public officials before Facebook suspends his account?” Sen. Richard Blumenthal asked Zuckerberg. He also asked whether Zuckerberg would commit to taking down Bannon’s Facebook page.

Zuckerberg said “no” and “that’s not what our policies suggest that we should do.”

The Facebook CEO added that the company does take down accounts that post content related to terrorism or child exploitation the first time they do so. However, for other categories, Facebook requires multiple violations before an account or page is removed.

Bannon was permanently suspended from Twitter last week after making the comments in a video. But the video was live on Bannon’s Facebook page for about 10 hours and was viewed almost 200,000 times before the company removed it, citing its violence and incitement policies.

Google gets a pass

From CNN Business’ Rishi Iyengar

As Facebook and Twitter face questions around censorship and suppression, one other Big Tech rival — Google — is conspicuously absent.

“Google has been given a pass from today’s hearing,” Senator Richard Blumenthal of Connecticut said in his opening remarks. “It’s been rewarded by this committee for its timidity, doing even less than [Facebook and Twitter] have to live up to its responsibilities.”

Google CEO Sundar Pichai appeared at last month’s Senate Commerce Committee hearing alongside his Facebook and Twitter counterparts, Mark Zuckerberg and Jack Dorsey, but Google has often escaped (or avoided) the same level of scrutiny from lawmakers.

In fact, back in 2018, the Senate Intelligence Committee set up an empty chair next to Dorsey and Facebook’s COO Sheryl Sandberg with a placard for Google, in a swipe at the company’s refusal to offer up Pichai or another high-level executive to testify.

Blumenthal argued that the tech companies have only taken “baby steps” to combat harmful misinformation on their platforms.

Google-owned YouTube was criticized for not doing enough to deal with misinformation during the election, applying a far less aggressive strategy than Facebook or Twitter did.

The video platform placed an information panel at the top of search results related to the election, as well as below videos that talked about the election, but allowed some videos containing misinformation to stay online without labeling or fact-checking them.

Zuckerberg claims Facebook isn’t addictive

From CNN Business’ Brian Fung and Kaya Yurieff

Zuckerberg called claims that social media platforms have an addictive quality “memes and misinformation.”

“We certainly do not design the product to be that way,” Zuckerberg said. “We certainly do not want our products to be addictive.”

Graham said he is increasingly concerned by research suggesting that there may be a public health problem associated with social media, drawing parallels with the tobacco industry.

Zuckerberg pushed back on the research, saying, “I don’t think the research has been conclusive,” but added it’s an area the company “cares about.” For example, he said the Facebook team running the news feed isn’t provided with data on how much time people are spending on its products.

A challenging task

From CNN Business’ Brian Fung

When asked what he heard during the hearing’s opening statements, Jack Dorsey admitted that content moderation isn’t easy.

“We are facing something that feels impossible.”

Both executives agreed with Graham that the government should not be involved in setting out what content should be removed from tech platforms. “I think it would be very challenging,” Dorsey said.

Zuckerberg said: “For certain types of illegal content, it may be appropriate for there to be clear rules around that. But outside of clear harms including for things like child exploitation, terrorism, I would agree with your sentiment that’s not something government should be deciding on a piece of content by piece of content basis.”

Dorsey and Zuckerberg defend content decisions

From CNN Business’ Brian Fung

Dorsey and Zuckerberg opened their testimony by acknowledging questions about the way their companies handled political content, particularly surrounding the election.

Dorsey said he recognized that Twitter made a mistake in the way it handled the New York Post story about Hunter Biden, saying the company quickly moved to update its policies on hacked materials.

Zuckerberg said of the election, “from what we’ve seen so far, our systems worked well.”

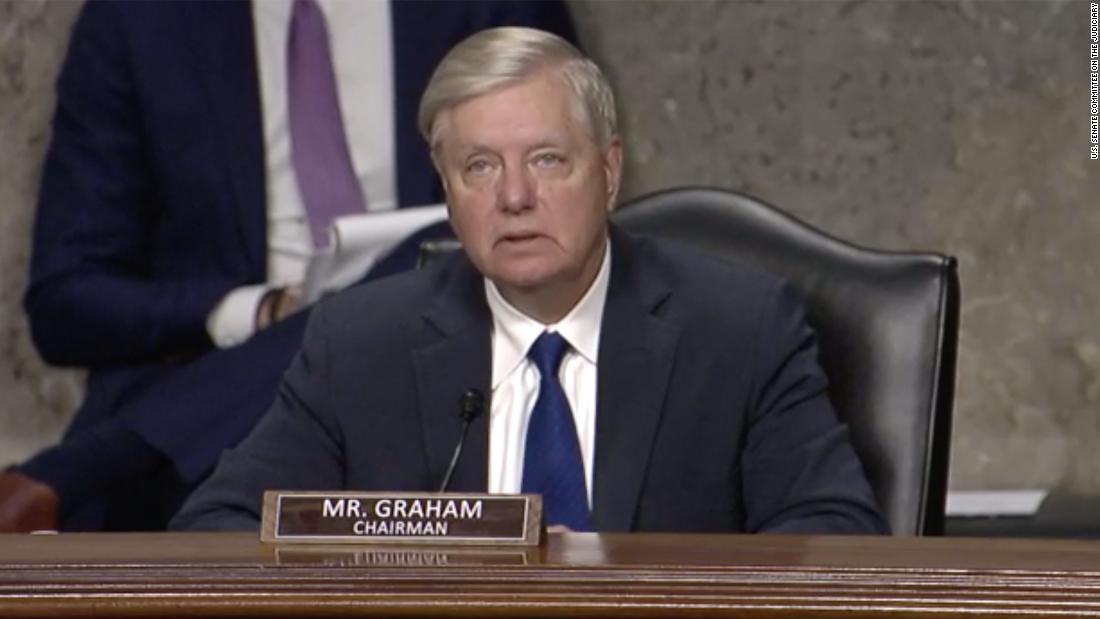

Graham gives accurate reading of controversial Section 230

From CNN Business’ Brian Fung

Here’s something worth noting: In his opening remarks, Graham described Section 230 in largely accurate terms. Normally, a senator accurately describing a provision of a law wouldn’t be notable, but with Section 230 it is, because the debate over updating the law is so often based on misconceptions of the law and its purpose.

Graham’s opening remarks may show that he hopes the hearing will be perceived as a push for productive dialogue.

Sen. Lindsey Graham kicks hearing off: “Something has to give”

From CNN Business’ Brian Fung

Sen. Lindsey Graham began the hearing with a relatively impartial description of Section 230, correctly characterizing the law as having protected tech companies for their content decision-making. But he quickly pivoted to claims of anti-conservative censorship regarding an article by the New York Post about Hunter Biden.

Under the current law, “you can sue the person who gave the tweet, but you can’t sue Twitter that gave that person access to the world,” Graham said. He added, accurately, that exposing tech companies early on in their lifetimes to content lawsuits could have prevented great companies from coming into existence.

But then he said that tech companies enjoy enormous power rivaling governments and legacy media companies, referring to Facebook and Twitter’s decisions to suppress a viral but baseless story by the New York Post containing unfounded allegations about Joe Biden’s son, Hunter Biden.

“I don’t want the government deciding what content to take up and put down,” Graham said. He added: “When you have companies that have the power of governments, far more power than traditional media outlets, something has to give.”

How to watch today’s hearing

From CNN Business’ Kaya Yurieff

The hearing is underway. There are a few ways to watch:

- Tune in to the Senate Judiciary Committee’s site.

- C-Span is livestreaming it here.

- CNN is also streaming the hearing in the top corner of this page.

Jack Dorsey touts Twitter’s content moderation around election

From CNN Business’ Brian Fung

Twitter CEO Jack Dorsey will tell senators that the company applied contextual warning labels to 300,000 tweets between Oct. 27 and Nov. 11, according to his prepared testimony.

Of those, the content of 456 tweets was also covered up by a warning message, Dorsey is expected to say. Those numbers were shared by Twitter in a blog post last week.

Twitter has witnessed a wave of misinformation as users including President Donald Trump and his allies have spread false and misleading claims about the election and its outcome. At one point, the social network applied warning labels to more than a third of Trump’s tweets after polls closed. Over the weekend, between Friday and Monday morning, Twitter affixed a fact-check label to more than 30 of his election-related tweets and retweets.

Dorsey will repeat several familiar themes from his testimony last month, including expressing a commitment to greater transparency around content moderation.

In response to proposals concerning Section 230, a federal law that grants websites legal immunity for curating the content on their platforms, Dorsey will warn that a repeal could lead to increased content removals and frivolous litigation while making it harder to address truly harmful material online.

In something of a jab at Facebook, Dorsey is also expected to oppose updates to technology laws that are done via “carve-outs” that he claims will “inevitably favor large incumbents” and “entrench” the most powerful tech companies.

“For innovation to thrive, we must not entrench the largest companies further,” Dorsey is expected to say.